I've been tracking technology trends professionally for over a decade: as an engineering leader, as a technology advisor for PE/VC firms, and as a top-1% expert network contributor. Along the way, I grew frustrated with the tools available for staying current. So I built my own.

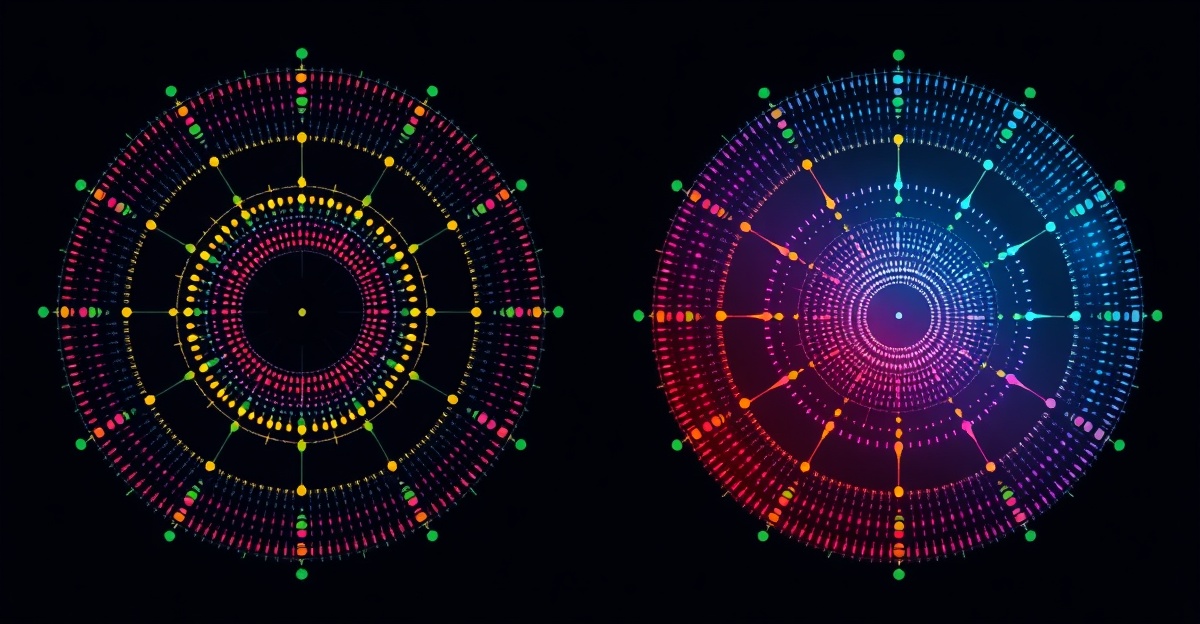

This is the story of why I created a data-driven, weekly technology radar and what I learned from the process.

Key Takeaways

- The Problem: Semi-annual advisory board radars publish too slowly for fast-moving technology decisions. By the time a trend appears in a twice-yearly report, early adopters have already moved.

- The Signal: Expert network call requests (what PE/VC firms are asking about) serve as a 6-12 month leading indicator of technology adoption. No public radar captures this signal.

- The Approach: Four quantitative data sources (Google Trends, GitHub activity, search volume, expert network mentions) combined with transparent, published weights. No black boxes, no editorial override.

- Where to Find the Data: The full radar, with all 400+ tools, scoring, and structured comparisons, lives on wetheflywheel.com. This page explains the thinking behind it.

The Problem with Semi-Annual Radars

The ThoughtWorks Technology Radar is an excellent product. Since 2010, it has helped thousands of enterprise teams make better technology decisions. I respect what they've built.

But it publishes twice a year. In the six months between editions, entire tool categories can shift. AI frameworks rise and fall. DevOps tooling consolidates. A new database appears, gains traction, and starts winning enterprise deals — all before the next advisory board sits down.

In my advisory work, I was constantly answering questions like "Is Tool X gaining real traction or is it just hype?" and "Should we evaluate Tool Y for our portfolio company?" I needed data, not a six-month-old opinion. I needed weekly signals, not semi-annual snapshots.

My Perspective: Expert Network + PE/VC Advisor

I have an unusual vantage point. As a top-1% expert network contributor across Tegus/AlphaSense, Office Hours, Third Bridge, Arbolus, Capvision, and Guidepoint, I participate in hundreds of technology advisory calls annually. These calls are for PE/VC firms and other institutional clients (Bain Capital, Berenberg, and McKinsey among them) evaluating technology investments and market positions.

What I noticed: the technologies that PE/VC firms start asking about consistently show up in mainstream adoption 6-12 months later. When three different firms ask me about the same tool in a single quarter, that's a leading indicator the public market hasn't priced in yet.

This signal (what investors are evaluating before the market catches on) doesn't exist in any traditional technology evaluation framework. ThoughtWorks reflects practitioner experience (what's working in projects). Gartner and Forrester reflect analyst opinion (who's a market leader, which vendor scores highest). None of them captures the investor signal, and all of them publish too slowly for the pace of technology change.

Why Quantitative + Weekly

I decided to build a radar that combines four measurable signals, refreshed every Monday:

- Google Trends (25%) — Search interest captures public awareness and mindshare

- GitHub activity (25%) — Stars, commits, contributors show developer momentum

- Expert network mentions (30%) — What PE/VC firms are asking about (the leading indicator)

- Search volume (20%) — Monthly search data shows market interest depth
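To make the weighting concrete, here is a minimal sketch of how a composite score could be computed from the four published weights. The weights come from the list above; the function name `composite_score`, the signal keys, and the assumption that each signal is already normalized to a 0-100 scale are illustrative choices, not the radar's actual implementation.

```python
# Weights as published above. Everything else in this sketch is hypothetical.
WEIGHTS = {
    "google_trends": 0.25,
    "github_activity": 0.25,
    "expert_mentions": 0.30,
    "search_volume": 0.20,
}


def composite_score(signals: dict[str, float]) -> float:
    """Weighted sum of four signals, each assumed pre-normalized to 0..100."""
    missing = WEIGHTS.keys() - signals.keys()
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    return sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)


# Made-up example values for a single tool in a single week.
example = {
    "google_trends": 60.0,
    "github_activity": 80.0,
    "expert_mentions": 90.0,
    "search_volume": 40.0,
}
print(round(composite_score(example), 2))
```

Because the weights sum to 1.0, the composite stays on the same 0-100 scale as its inputs, which keeps scores comparable from week to week.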

The weekly cadence matters. Technology decisions don't happen on a semi-annual cycle. A startup CTO choosing their data stack needs current signals. A VC evaluating a Series B needs this week's momentum, not last October's advisory board consensus.

I've seen this play out repeatedly. Tools that showed sustained momentum across multiple signals (rising Google Trends, increasing GitHub activity, growing expert network mentions) almost always translated into real adoption. Single-source spikes (a viral tweet driving GitHub stars, a launch event inflating search interest) were reliably noise. The multi-signal approach separates hype from genuine traction.
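The multi-signal idea can be sketched as a simple agreement check: count how many signals grew over a recent window, and treat growth in only one of them as a likely spike. The 10% growth threshold, the three-signal minimum, and the names `growth` and `classify_momentum` are assumptions made for illustration, not the radar's published thresholds.

```python
def growth(series: list[float]) -> float:
    """Relative change from the first to the last value in the window."""
    first, last = series[0], series[-1]
    if first <= 0:
        return 0.0  # avoid division by zero; treat as no measurable growth
    return last / first - 1.0


def classify_momentum(
    history: dict[str, list[float]],
    min_growth: float = 0.10,  # hypothetical threshold: 10% over the window
    min_signals: int = 3,      # hypothetical: at least three signals must agree
) -> str:
    """Label a tool by how many of its signals grew over the window."""
    up = [name for name, series in history.items() if growth(series) >= min_growth]
    if len(up) >= min_signals:
        return "multi-signal momentum"
    if len(up) == 1:
        return "single-source spike"
    return "inconclusive"


# Made-up weekly values: tool_a grows on three signals, tool_b only on GitHub.
tool_a = {
    "google_trends": [50, 55, 60, 66],
    "github_activity": [40, 44, 50, 56],
    "expert_mentions": [30, 36, 40, 45],
    "search_volume": [70, 70, 71, 70],
}
tool_b = {
    "google_trends": [50, 50, 51, 50],
    "github_activity": [40, 41, 40, 90],
    "expert_mentions": [30, 30, 30, 30],
    "search_volume": [70, 70, 71, 70],
}
print(classify_momentum(tool_a))
print(classify_momentum(tool_b))
```

In this toy data, the GitHub jump in `tool_b` is larger than any single move in `tool_a`, yet it is the one flagged as a spike because nothing else confirms it.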

Building a Transparent Scoring Algorithm

One of my biggest frustrations with existing radars is opacity. ThoughtWorks describes their advisory board process, but you can't reproduce their scoring. Gartner's methodology is proprietary. You're trusting a black box.

I made a deliberate design choice: every aspect of the scoring algorithm is public. The weights are published. The normalization approach is documented. The movement classification thresholds are transparent. If you disagree with a tool's score, you can trace exactly why it scored the way it did.

This transparency has a practical benefit beyond trust. When I present radar data in advisory calls, clients can interrogate the methodology. "Why is Tool X scored higher than Tool Y?" has a data-backed answer, not "because the board felt it was more interesting."

The full technical methodology (normalization formulas, EWMA smoothing, hysteresis rules, pipeline architecture) is documented on the WTF Technology Radar methodology page.
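For readers unfamiliar with the two techniques named above, here is a generic sketch of EWMA smoothing followed by a hysteresis rule. It shows the textbook versions only: the smoothing factor, the enter/exit thresholds, the two labels, and the function names are all assumptions, and the actual parameters are the ones documented on the methodology page.

```python
def ewma(values: list[float], alpha: float = 0.5) -> list[float]:
    """Exponentially weighted moving average; the first value seeds the series."""
    smoothed: list[float] = []
    for value in values:
        if not smoothed:
            smoothed.append(float(value))
        else:
            smoothed.append(alpha * value + (1 - alpha) * smoothed[-1])
    return smoothed


def label_movement(
    scores: list[float],
    enter_delta: float = 5.0,  # hypothetical: weekly gain needed to become "rising"
    exit_delta: float = 2.0,   # hypothetical: gain at or below this returns to "stable"
) -> list[str]:
    """Label each week using two thresholds so labels do not flip on small wobbles."""
    labels = ["stable"]
    for previous, current in zip(scores, scores[1:]):
        delta = current - previous
        state = labels[-1]
        if state == "stable" and delta >= enter_delta:
            state = "rising"
        elif state == "rising" and delta <= exit_delta:
            state = "stable"
        labels.append(state)
    return labels


# Made-up weekly composite scores for one tool.
raw = [50, 50, 70, 72, 74, 75, 75]
smoothed = ewma(raw, alpha=0.5)
print(smoothed)
print(label_movement(smoothed))
```

The gap between the two thresholds is the point of hysteresis: a tool that has started rising keeps that label while its weekly gain stays above the lower exit threshold, instead of flipping back the first time the gain dips below the entry threshold.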

Where to Find the Data

The complete radar lives on wetheflywheel.com: all 400+ tools, weekly scores, movement history, and the full scoring methodology. That's the data product.

Here on prommer.net, I publish weekly commentary: my interpretation of what the data means, which signals matter, and what tech leaders should pay attention to. The WTF Radar answers "what the data shows"; I answer "why it's happening and what to do about it."

For detailed feature-by-feature comparisons, including comparison tables, pros/cons, and structured verdicts, see the comparison guides on wetheflywheel.com:

- WTF Radar vs ThoughtWorks Technology Radar

- WTF Radar vs Gartner Magic Quadrant

- WTF Radar vs Forrester Wave

- All Four Frameworks Compared

Questions About the Radar

Why did you build your own technology radar instead of using ThoughtWorks?

ThoughtWorks publishes twice a year based on advisory board consensus. In my work advising PE/VC firms on technology due diligence, I needed weekly signals — not semi-annual snapshots. I also wanted quantitative data I could point to, not just 'the board thinks X is interesting.' So I built a system that combines four measurable data sources with a transparent scoring algorithm.

Isn't the ThoughtWorks radar good enough for most teams?

For enterprise architecture planning on 6-12 month horizons, absolutely. ThoughtWorks has 15+ years of credibility and their advisory board brings deep implementation experience. But if you're making fast-moving decisions — evaluating tools for a startup stack, conducting VC due diligence, or tracking competitive landscapes — you need something faster and more data-driven.

What makes the expert network signal special?

As a top-1% contributor across six expert networks, I participate in hundreds of technology advisory calls annually for PE/VC firms. When I see the same technology come up repeatedly in call requests, it means investors and strategic buyers are actively evaluating it. This signal consistently leads public adoption trends by 6-12 months — it's the most valuable input in the scoring algorithm.

How transparent is the scoring methodology?

Completely. The weights are published (Google Trends 25%, GitHub 25%, Expert Network 30%, Search Volume 20%), the normalization approach is documented, and the movement classification thresholds are public. Anyone can understand why a tool scored the way it did. This is a deliberate design choice — the ThoughtWorks process is described but not reproducible.

Where can I see the full radar data and structured comparison?

The complete radar with all 400+ tools, weekly scores, and movement history is at wetheflywheel.com/en/radar/. For a detailed feature-by-feature comparison with ThoughtWorks, see wetheflywheel.com/en/guides/wtf-radar-vs-thoughtworks-technology-radar/.
