Key Takeaways

- Pattern one: graph-based — Use when explicit state transitions, deterministic resumability, and audit traceability are non-negotiable. LangGraph is the default; AutoGen v0.4 inside Microsoft estates.

- Pattern two: durable-execution — Use when the workflow must survive restarts, run for hours or days, and integrate with existing backend infrastructure. Temporal for the heavy case; Mastra or Inngest for the TypeScript-first case.

- Pattern three: role-based — Use when the value of fast iteration outweighs the cost of harder production hardening. CrewAI and the OpenAI Agents SDK both fit; both need supplementing for scale.

- The four debts that override all three — Process, data, technical, cultural. Per the HBR/Deloitte 2026 paper, these account for the majority of agentic project cancellations. The framework cannot fix them.

I have built an orchestration layer three times now

Microservices in the mid-2010s. API gateways in the late 2010s. AI agents in the 2020s. Each one looked like a different problem at first; each one collapsed into the same handful of questions about state, durability, observability, and human oversight. The marketing was different each time. The patterns rhymed.

This piece is the architectural-pattern view of agent orchestration as it has actually settled in production by mid-2026. It pairs with the framework-level selection grade at wetheflywheel.com/en/guides/best-agent-orchestration-frameworks-2026/. Read this one first to pick the family. Use the WTF guide to pick the specific framework.

I keep building the same orchestration patterns with different vocabulary. The patterns are stable. The frameworks are not.

The four debts that override pattern choice

Before naming the three patterns, the architectural caveat. The 2026 HBR Analytic Services white paper sponsored by Deloitte, Agentic AI: Orchestrating Intelligent Operations, cites a Gartner projection that 40 percent of agentic AI projects will be canceled by the end of 2027. The paper attributes most cancellations to four categories of organisational debt:

- Process debt. Workflows designed for human execution that cannot be run by agents without redesign.

- Data debt. Fragmented or inconsistent information that prevents reliable agent decision making.

- Technical debt. Legacy systems that do not integrate smoothly with orchestration layers.

- Cultural resistance. The friction that surfaces whenever human roles shift, even when the new arrangement is objectively better.

None of the three patterns below fix any of those four. A graph-based orchestration on top of fragmented data is still working against fragmented data. A durable-execution framework that calls into a legacy system the agents cannot read is still blocked by the legacy system. A role-based prototype that ships into an organisation hostile to AI-augmented work is still going to stall in the next operating review.

Framework choice is a useful second-order decision. It is not a first-order decision. If you can only do one thing for the agentic programme this quarter, do an honest debt audit before you spend a week comparing LangGraph and CrewAI feature matrices.

Pattern one: graph-based orchestration

The agent system is modelled as a state machine. Nodes are functions or agent calls; edges are transitions; the runtime executes the graph and persists state at checkpoints. The mental model is the same one you have used for distributed workflow design for the last fifteen years, with the difference that some of the nodes are LLM invocations rather than pure functions.
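To make the shape concrete, here is a minimal sketch of a graph runtime with checkpointing. It is not LangGraph's API or any other framework's; the `Graph` class, its method names, and the JSON checkpoint file are all hypothetical and exist only to illustrate nodes, edges, and resumable state.

```python
import json
from pathlib import Path
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]
Edge = Callable[[State], Optional[str]]  # returns the next node name, or None to stop


class Graph:
    """Toy graph runtime (hypothetical): nodes transform state, edges pick the next node."""

    def __init__(self, checkpoint_path: Path) -> None:
        self.nodes: Dict[str, Node] = {}
        self.edges: Dict[str, Edge] = {}
        self.checkpoint_path = checkpoint_path

    def add_node(self, name: str, fn: Node, next_node: Edge) -> None:
        self.nodes[name] = fn
        self.edges[name] = next_node

    def run(self, start: str, state: State) -> State:
        # If a checkpoint exists, resume from it and ignore the arguments.
        if self.checkpoint_path.exists():
            saved = json.loads(self.checkpoint_path.read_text())
            current, state = saved["next"], saved["state"]
        else:
            current = start
        while current is not None:
            state = self.nodes[current](state)    # a node may be an LLM call
            current = self.edges[current](state)  # the edge decides the transition
            # Persist the state and the next node after every transition.
            self.checkpoint_path.write_text(
                json.dumps({"next": current, "state": state})
            )
        return state
```

If a node raises halfway through, calling `run` again picks up at the node that failed, with the state produced by the nodes before it. State has to be JSON-serialisable in this sketch; real frameworks make their own choices about checkpoint storage.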

When it wins. When you need explicit control over what runs next, deterministic resumability, and a visible execution path you can show to auditors. Compliance-adjacent flows. Multi-turn reasoning where the state of the conversation needs to be inspectable. Anything where the failure mode you are most engineering around is loss of execution state mid-flow.

When it breaks. When the team treats the graph as a piece of code to be edited continuously rather than a structural commitment. Graph-based frameworks reward stable graphs and punish constant rewiring. The teams that get the most out of LangGraph treat the graph as roughly as load-bearing as their database schema; the teams that struggle treat it as another layer of business logic and refactor it weekly.

Frameworks worth knowing. LangGraph is the category default. AutoGen v0.4 is a credible second, particularly inside Microsoft estates where the OpenTelemetry-first observability story matches existing observability investments.

Pattern two: durable-execution

The agent system runs on top of a workflow engine. Steps are durable and retryable; the runtime survives process restarts and external failures; the agent loop is one kind of step among many. The pattern comes from the broader workflow-engine world (Temporal, Inngest) and predates agents by years. Several newer frameworks (Mastra, Letta) are built durable-first.
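A rough sketch of what "durable and retryable" means in practice, using only the standard library. The `DurableWorkflow` class and its journal file are hypothetical and much simpler than what Temporal or Inngest actually do; the point is only that a completed step is recorded, so a restarted process replays the result instead of redoing the work.

```python
import json
from pathlib import Path
from typing import Any, Callable


class DurableWorkflow:
    """Toy step journal (hypothetical): completed steps survive a process restart."""

    def __init__(self, journal_path: Path, max_attempts: int = 3) -> None:
        self.journal_path = journal_path
        self.max_attempts = max_attempts
        # Load results of steps that finished before the last restart, if any.
        self.journal = (
            json.loads(journal_path.read_text()) if journal_path.exists() else {}
        )

    def step(self, name: str, fn: Callable[[], Any]) -> Any:
        # A step that already completed is replayed from the journal, not re-run.
        if name in self.journal:
            return self.journal[name]
        last_error = None
        for _ in range(self.max_attempts):
            try:
                result = fn()
                break
            except Exception as exc:  # retry on any failure in this sketch
                last_error = exc
        else:
            raise RuntimeError(
                f"step {name!r} failed after {self.max_attempts} attempts"
            ) from last_error
        # Record the result before returning so a later restart can skip the step.
        self.journal[name] = result
        self.journal_path.write_text(json.dumps(self.journal))
        return result
```

An agent loop would be one `step` among others here: a fetch, an LLM call, a write to a backend. Step results must be JSON-serialisable in this sketch, and there is no backoff between retries.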

When it wins. Any workflow that has to run for more than an hour, must survive process restarts, or must integrate with several existing backends. Regulated industries where the audit trail of every step is a requirement, not a nice-to-have. Backend-heavy systems where the agent is one long-running concern among many. The failure mode this pattern engineers around is the workflow losing hours of progress to an unrelated restart.

When it breaks. When the engineering team has not adopted a workflow engine for non-agent reasons and is being asked to learn one in service of the agent project. The learning curve is real and is shaped less by agents than by the workflow-engine model itself. The teams that already operate Temporal for non-agent workloads adopt Temporal-for-AI easily; the teams that adopt Temporal specifically for agents pay the workflow-engine learning tax with the agent project as the lens.

Frameworks worth knowing. Temporal is the heavyweight. Inngest AgentKit is the lighter TypeScript-first developer-experience play. Mastra and Letta are the two newer entrants that build the durable-execution machinery into a framework specifically designed for agents.

The durable-execution pattern is the most under-appreciated of the three. The first time a long-running workflow restarts mid-step, it pays for itself.

Pattern three: role-based

The agent system is modelled as a crew of agents with named roles, goals, and communication patterns. The runtime handles message passing between agents and agent-to-tool calls. The mental model is social rather than mechanical. The framework decides how the conversation routes.
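As a sketch of that social model, the snippet below routes messages between agents with named roles and goals. It does not use CrewAI or the OpenAI Agents SDK; `Message`, `Agent`, and `Crew` are hypothetical names, and each agent's `act` callable stands in for an LLM call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Message:
    sender: str
    recipient: str
    content: str


@dataclass
class Agent:
    role: str
    goal: str
    # Stand-in for an LLM call: returns (next recipient, reply content).
    act: Callable[[Message], Tuple[str, str]]


class Crew:
    """Toy role-based runtime (hypothetical): passes messages between named roles."""

    def __init__(self, agents: List[Agent]) -> None:
        self.agents: Dict[str, Agent] = {a.role: a for a in agents}
        # The transcript lives only in memory; nothing here is durable.
        self.transcript: List[Message] = []

    def run(self, first: Message, max_turns: int = 10) -> List[Message]:
        message = first
        for _ in range(max_turns):
            self.transcript.append(message)
            agent = self.agents.get(message.recipient)
            if agent is None:  # addressed outside the crew, e.g. back to "user"
                break
            recipient, content = agent.act(message)
            message = Message(agent.role, recipient, content)
        return self.transcript
```

A planner, researcher, and writer can be wired up in a few lines, with the writer addressing its reply to `"user"` to end the run. Note what is missing: no checkpoints, no retries, no trace beyond an in-memory list.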

When it wins. Prototypes. Task automation in the early adoption stage where the cost of getting a framework choice wrong is small. Demos where the executive audience needs to see the decomposition as a story rather than a state machine. Systems where the natural decomposition is genuinely social (planner, researcher, writer, critic) rather than a graph.

When it breaks. At scale. Role-based frameworks tend to make state durability and observability application concerns rather than framework concerns. The same property that makes them fast for prototypes makes them expensive to operate at autonomy levels two and three (per Vijayan's phased-autonomy framework: review every decision, spot-check, then exception-only). The teams that succeed with CrewAI tend to outgrow it within a year and then either layer durable-execution underneath or rewrite into a graph-based framework.

Frameworks worth knowing. CrewAI and the OpenAI Agents SDK are the two with serious adoption. Both have credible production stories now; both still leave more of the wiring to the application than the durable-execution alternatives.

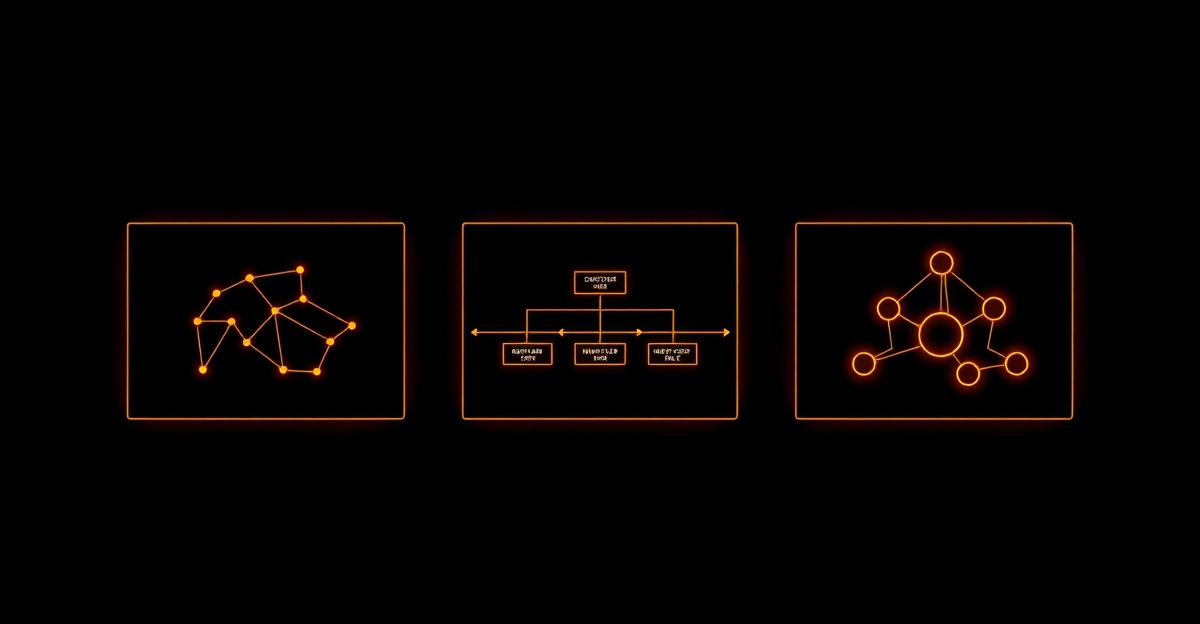

The three patterns at a glance

| Pattern | Failure mode engineered around | Representative frameworks | Sweet spot |
|---|---|---|---|
| Graph-based | Loss of execution state mid-flow | LangGraph, AutoGen v0.4 | Compliance-adjacent flows, multi-turn reasoning chains, agent-as-state-machine |
| Durable-execution | Process restart loses hours of work | Temporal, Mastra, Inngest, Letta | Long-running workflows, multi-system orchestration, regulated industries |
| Role-based | Building the abstraction layer before the value is proven | CrewAI, OpenAI Agents SDK | Prototypes, task automation at the start of the adoption curve, demo-driven development |

How I pick in practice

The selection conversation usually takes ninety minutes if the team is honest about the failure mode that matters most. The questions, in order:

- What is the longest the workflow needs to run? If hours-to-days, durable-execution is doing more work than the alternatives.

- If the process running the workflow restarts, what happens? If the answer involves losing progress that cannot be cheaply re-derived, durable-execution again.

- Is the system going to be audited? If yes, graph-based unless there is a specific reason to choose otherwise.

- How much state needs to be inspectable mid-flow? If a lot, graph-based. If only at the boundaries, the role-based options are fine.

- Is this the first agent system your team has shipped? If yes, lean role-based for the prototype; commit to migrating once the system is in production for ninety days.
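The questions above can be sketched as a small decision function. This is one reading of the list, not a formal rule: the `Workload` fields are hypothetical names, the one-hour threshold is an assumption standing in for "hours-to-days", and the questions are simply evaluated in the order given, with role-based as the fallback.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    max_runtime_hours: float
    restart_loses_costly_progress: bool
    will_be_audited: bool
    needs_mid_flow_inspection: bool
    first_agent_system: bool


def pick_pattern(w: Workload) -> str:
    """Walk the selection questions in order and return a pattern family."""
    # Questions 1 and 2: long runtimes or costly restarts point to durable-execution.
    # The one-hour cutoff is an assumed threshold, not a figure from a framework.
    if w.max_runtime_hours > 1 or w.restart_loses_costly_progress:
        return "durable-execution"
    # Questions 3 and 4: audits or heavy mid-flow inspection point to graph-based.
    if w.will_be_audited or w.needs_mid_flow_inspection:
        return "graph-based"
    # Question 5 and the fallback: role-based is fine for a first prototype
    # or when state only matters at the boundaries.
    return "role-based"


if __name__ == "__main__":
    nightly_report = Workload(
        max_runtime_hours=6,
        restart_loses_costly_progress=True,
        will_be_audited=False,
        needs_mid_flow_inspection=False,
        first_agent_system=False,
    )
    print(pick_pattern(nightly_report))
```

In a real conversation the answers are rarely this binary, and a workload that trips both the first and third questions is a candidate for combining patterns rather than choosing one.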

The combinations that come up most often in production are graph-based on top of durable-execution (LangGraph nodes inside a Temporal workflow), or role-based for the first six months replaced by graph-based once the topology stabilises. Pure single-pattern systems are common in prototypes and rare in mature production stacks.

The economics question

Two notes on cost that the framework discussion routinely under-weights.

The first, surfaced sharply in the HBR/Deloitte paper, is that token economics breaks classic total-cost-of-ownership frameworks. Every agent interaction consumes tokens. Tokens carry trackable cost. Total spend scales nonlinearly with usage in a way licenses and seats do not. The pricing-model fit between your framework and your workload is a financial constraint, not a procurement nicety. Frameworks priced per execution or per token align with the workload. Frameworks priced per seat or per fixed deliverable will create an argument with finance that the technology cannot win.
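One mechanism that can produce nonlinear spend, offered here as an illustration rather than as the paper's own argument: if an agent resends its full conversation history on every turn, input tokens grow roughly with the square of the number of turns. The function names, token counts, and price below are all hypothetical.

```python
def cumulative_input_tokens(turns: int, tokens_added_per_turn: int) -> int:
    """Total input tokens when every turn resends the whole history so far."""
    # Turn k sends k * tokens_added_per_turn tokens of accumulated context.
    return sum(turn * tokens_added_per_turn for turn in range(1, turns + 1))


def run_cost(turns: int, tokens_added_per_turn: int, price_per_million: float) -> float:
    """Input-token cost of one run at a hypothetical per-million-token price."""
    return cumulative_input_tokens(turns, tokens_added_per_turn) * price_per_million / 1_000_000


if __name__ == "__main__":
    for turns in (10, 20, 40):
        print(turns, cumulative_input_tokens(turns, 500))
```

Under these assumptions, doubling a run from 10 to 20 turns takes input tokens from 27,500 to 105,000, nearly four times as many. A per-seat licence has no equivalent behaviour, which is why the pricing model has to be checked against how the workload actually loops.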

The second, from Alex Bakker at ISG Research interviewed in the same paper, is the emergence of autonomy-level pricing: predefined contract tiers established at the start of a multiyear engagement that give providers incentives to pursue automation without requiring a change order every time. The mechanism is still rare. The direction is clear. The framework discussion should include an explicit conversation about how the pricing model behaves at autonomy levels two and three, not only at level one.

What I would tell a CTO starting today

Three things, in order of weight.

- Do the four-debt audit before the framework selection. Process, data, technical, cultural. If three or more of those are red, the framework discussion is premature and the work that pays back is debt reduction.

- Pick the pattern by failure mode. Not by feature matrix, not by the loudest marketing. The failure mode you most need to engineer around is the most reliable guide to the right pattern. The framework choice within the pattern matters less.

- Plan for the pattern to outlast the framework. LangGraph, Temporal, CrewAI, OpenAI Agents SDK. All of them will look different in eighteen months. The pattern will not. Architect around the pattern; choose the framework as the current best implementation of that pattern; expect to swap the framework once or twice over the lifespan of the system. That is the same pattern as microservices runtimes and API gateways. It will be the same pattern for agent orchestration.

Why these three patterns and not five or six?

Because three is what the field has actually settled on. There are more frameworks than patterns. LangGraph and AutoGen v0.4 are different products with the same architectural shape. Mastra and Letta are different durable-execution implementations of the same pattern. The vendor proliferation is real; the pattern landscape is small.

How do you pick between patterns in a new project?

Start from the failure mode that would hurt most. If losing state mid-flow is the failure that matters most, pick graph-based. If the workflow has to survive process restarts and run for days, pick durable-execution. If the value of fast iteration outweighs production-readiness, pick role-based. I have done this three times now and the failure-mode question is what reliably picks the right answer.

How does this map to the WTF orchestration frameworks guide?

That guide ranks the ten leading frameworks across twelve axes including state durability, observability, language support, and pricing-model fit. It is the operational selection grade. This piece is the architectural-pattern view that sits one level above it. The two pieces are designed to be read together: read this first to pick the family, then go to the WTF guide to pick the specific framework. The link is at wetheflywheel.com/en/guides/best-agent-orchestration-frameworks-2026/.

What is the HBR/Deloitte four debts framework?

A diagnostic framework from the 2026 HBR Analytic Services paper sponsored by Deloitte ("Agentic AI: Orchestrating Intelligent Operations"). It names four categories of organisational debt that block agentic AI from working at scale: process debt (workflows designed for humans that cannot be executed by agents without redesign), data debt (fragmented or inconsistent information that prevents reliable decision making), technical debt (legacy systems that do not integrate with orchestration layers), and cultural resistance (friction whenever human roles shift). The paper cites a Gartner projection that 40 percent of agentic AI projects will be canceled by the end of 2027, and attributes most of those cancellations to these four debts rather than to framework choice.

You mention building orchestration layers three times. What were the previous two?

Microservices in the mid-2010s, when the question was how to coordinate dozens of services that each owned a slice of a domain. API gateways in the late 2010s, when the question shifted from coordination to mediation: rate limits, auth, and a single point of policy for traffic going to the services. Agents are the third generation. The questions rhyme: state, durability, observability, human-in-the-loop. The vocabulary is different but the architectural shape has more in common with the previous two waves than the marketing decks let on.

Does the pattern choice still matter if the four debts dominate outcomes?

Yes, but not as much as the marketing implies. The pattern choice matters when the four debts are cleared or being actively worked on. When the debts dominate (process designed for humans, data fragmented, legacy systems unreachable, culture resistant) the framework you pick has almost no effect on whether the project succeeds. The first investment is in the organisational layer. The framework discussion is the second investment.

What is the most under-appreciated pattern?

Durable-execution, by a margin. The graph-based and role-based options dominate the discussion because LangGraph and CrewAI have the loudest marketing. Durable-execution wins more production deployments than the discussion suggests, because once a workflow has to survive a restart or run for more than an hour, the durability question becomes the binding constraint. Temporal-for-AI in particular is in more production agent stacks than its marketing presence would predict.
